GPT-4o Mini vs Gemini 1.5 Flash: AI Model Comparison

Explore the key differences between OpenAI's and Google's cost-effective language models

GPT-4o Mini: specialties & advantages

GPT-4o Mini is OpenAI's cost-efficient small model, designed to make advanced AI capabilities more accessible. It offers impressive performance at a fraction of the cost of larger models.

Key strengths include:

- Multimodal capabilities (text and vision)

- Large context window of 128K tokens

- Strong performance in reasoning tasks

- Improved efficiency and significantly lower cost compared to larger models

- Support for up to 16.4K output tokens per request

- Knowledge cutoff of October 2023

GPT-4o Mini is particularly well-suited for applications requiring a balance between advanced capabilities and cost-effectiveness.

Best use cases for GPT-4o Mini

Here are examples of ways to take advantage of its greatest strengths:

High-Volume Data Processing

GPT-4o Mini's large context window and efficiency make it ideal for processing full code bases or extensive conversation histories in applications.

Real-Time Customer Support

Its low latency and cost-effectiveness make GPT-4o Mini perfect for powering fast, real-time customer support chatbots.

Multimodal Applications

With support for both text and vision inputs, GPT-4o Mini is suitable for developing applications that require processing and understanding of multiple data types.

Gemini 1.5 Flash: specialties & advantages

Gemini 1.5 Flash is Google's advanced language model, designed for fast performance and improved capabilities. It offers significant improvements over previous models at a competitive price point.

Key strengths include:

- Multimodal capabilities (text and vision)

- Massive context window of 1 million tokens

- Advanced reasoning and problem-solving abilities

- Extremely fast output speed

- Support for over 100 languages

- Knowledge cutoff of November 2023

Gemini 1.5 Flash is particularly well-suited for applications requiring a balance between advanced capabilities, speed, and cost-effectiveness.

Best use cases for Gemini 1.5 Flash

On the other hand, here's what you can build with this LLM:

Large-Scale Information Processing

Gemini 1.5 Flash's massive context window makes it ideal for analyzing and processing large volumes of data, such as entire codebases or extensive documents.

Real-Time AI Applications

With its extremely fast output speed, Gemini 1.5 Flash excels in real-time applications like live customer support, instant content generation, and rapid data analysis.

Multilingual and Multimodal Tasks

Supporting over 100 languages and having multimodal capabilities, Gemini 1.5 Flash is perfect for diverse applications requiring language understanding and visual processing.

In summary

When comparing GPT-4o Mini and Gemini 1.5 Flash, several key differences emerge:

- Context Window: Gemini 1.5 Flash offers a much larger context window (1 million tokens) compared to GPT-4o Mini (128K tokens), allowing for processing of significantly larger data volumes.

- Speed: Gemini 1.5 Flash has a faster output speed at 163.6 tokens per second, compared to GPT-4o Mini's 86.8 tokens per second.

- Latency: GPT-4o Mini has lower latency with a Time to First Token (TTFT) of 0.45 seconds, while Gemini 1.5 Flash has a TTFT of 1.06 seconds.

- Cost: GPT-4o Mini is more cost-effective on list price, at $0.15 per million input tokens and $0.60 per million output tokens, compared to Gemini 1.5 Flash's blended price of $0.53 per million tokens ($0.35 for input, $1.05 for output).

- Performance: Both models perform similarly on benchmarks, with GPT-4o Mini slightly outperforming Gemini 1.5 Flash on MMLU (82.0% vs 78.9% for 5-shot) and MMMU (59.4% vs 56.1%).

- Maximum Output: GPT-4o Mini can generate up to 16.4K tokens per request, while Gemini 1.5 Flash is limited to 8,192 tokens.

- Language Support: Gemini 1.5 Flash supports over 100 languages, while GPT-4o Mini's language support is not explicitly specified but is described as multilingual.

For most applications requiring a balance between advanced capabilities, speed, and cost-effectiveness, both models offer compelling options. Gemini 1.5 Flash may be preferable for tasks requiring extensive context processing or extremely fast output, while GPT-4o Mini might be better suited for applications needing lower latency or slightly higher performance on certain benchmarks.
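The trade-off between latency and output speed can be made concrete with a little arithmetic. The sketch below uses only the TTFT and tokens-per-second figures quoted above and assumes a deliberately simple model in which total response time is TTFT plus output length divided by output speed. The class and function names are illustrative, and real-world timings will vary with load, network conditions, and prompt size.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTiming:
    name: str
    ttft_s: float        # time to first token, in seconds
    tokens_per_s: float  # output speed, in tokens per second


# Figures taken from the comparison above.
GPT_4O_MINI = ModelTiming("GPT-4o Mini", ttft_s=0.45, tokens_per_s=86.8)
GEMINI_FLASH = ModelTiming("Gemini 1.5 Flash", ttft_s=1.06, tokens_per_s=163.6)


def response_time(model: ModelTiming, output_tokens: int) -> float:
    """Estimated seconds to receive a full response (simplified model)."""
    return model.ttft_s + output_tokens / model.tokens_per_s


def break_even_tokens(low_latency: ModelTiming, high_throughput: ModelTiming) -> float:
    """Output length at which both models finish at the same time."""
    extra_wait = high_throughput.ttft_s - low_latency.ttft_s
    speed_gap = high_throughput.tokens_per_s - low_latency.tokens_per_s
    return extra_wait * low_latency.tokens_per_s * high_throughput.tokens_per_s / speed_gap


if __name__ == "__main__":
    for n in (50, 500):
        for model in (GPT_4O_MINI, GEMINI_FLASH):
            print(f"{model.name}: {n} tokens in about {response_time(model, n):.2f}s")
    print(f"Break-even: about {break_even_tokens(GPT_4O_MINI, GEMINI_FLASH):.0f} tokens")
```

Under these assumptions the break-even point is roughly 113 output tokens: shorter replies finish sooner on GPT-4o Mini, while longer ones finish sooner on Gemini 1.5 Flash.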

Use Licode to build products out of custom AI models

Build your own apps with our out-of-the-box AI-focused features, like monetization, custom models, interface building, automations, and more!

Enable AI in your app

Licode comes with built-in AI infrastructure that allows you to easily craft a prompt and use any Large Language Model (LLM) like Google Gemini, OpenAI GPTs, and Anthropic Claude.

Supply knowledge to your model

Licode's built-in RAG (Retrieval-Augmented Generation) system helps your models understand a vast amount of knowledge with minimal resource usage.

Build your AI app's interface

Licode offers a library of pre-built UI components from text & images to form inputs, charts, tables, and AI interactions. Ship your AI-powered app with a great UI fast.

Authenticate and manage users

Launch your AI-powered app with sign-up and log in pages out of the box. Set private pages for authenticated users only.

Monetize your app

Licode provides a built-in Subscriptions and AI Credits billing system. Create different subscription plans and set the amount of credits you want to charge for AI Usage.

Accept payments with Stripe

Licode makes it easy for you to integrate Stripe in your app. Start earning and grow revenue for your business.

Create custom actions

Give your app logic with Licode Actions. Perform database operations, AI interactions, and third-party integrations.

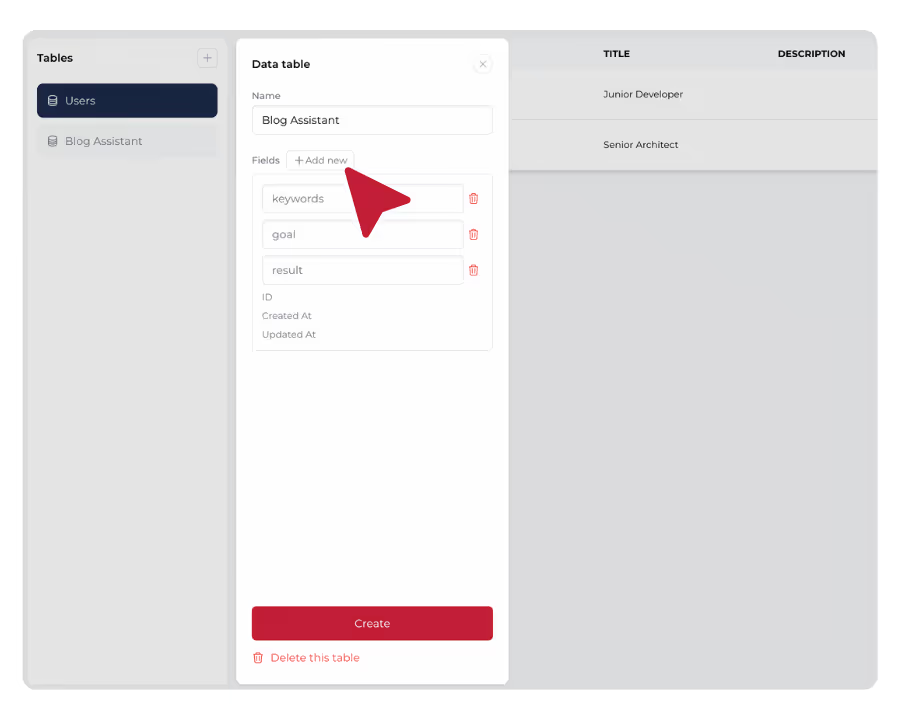

Store data in the database

Simply create data tables in a secure Licode database. Empower your AI app with data. Save data easily without any hassle.

Publish and launch

Just one click and your AI app will be online for all devices. Share it with your team, clients or customers. Update and iterate easily.

Browse our templates

StrawberryGPT

StrawberryGPT is an AI-powered letter counter that can tell you the correct number of "r" occurrences in "Strawberry".

AI Tweet Generator

An AI tool to help your audience generate a compelling Twitter / X post. Try it out!

YouTube Summarizer

An AI-powered app that summarizes YouTube videos and produces content such as a blog, summary, or FAQ.

Don't take our word for it

I've built with various AI tools and have found Licode to be the most efficient and user-friendly solution. In a world where only 51% of women currently integrate AI into their professional lives, Licode has empowered me to create innovative tools in record time that are transforming the workplace experience for women across Australia.

Licode has made building micro tools like my YouTube Summarizer incredibly easy. I've seen a huge boost in user engagement and conversions since launching it. I don't have to worry about my dev resource and any backend hassle.


FAQ

What are the main differences in capabilities between GPT-4o Mini and Gemini 1.5 Flash?

The main differences in capabilities between GPT-4o Mini and Gemini 1.5 Flash are:

- Context Window: Gemini 1.5 Flash has a much larger context window (1 million tokens) compared to GPT-4o Mini (128K tokens).

- Speed: Gemini 1.5 Flash has a faster output speed (163.6 tokens/s) compared to GPT-4o Mini (86.8 tokens/s).

- Latency: GPT-4o Mini has lower latency with a TTFT of 0.45s, while Gemini 1.5 Flash has a TTFT of 1.06s.

- Maximum Output: GPT-4o Mini can generate up to 16.4K tokens per request, while Gemini 1.5 Flash is limited to 8,192 tokens.

- Language Support: Gemini 1.5 Flash explicitly supports over 100 languages, while GPT-4o Mini's language support is less clearly defined.

- Performance: GPT-4o Mini slightly outperforms Gemini 1.5 Flash on some benchmarks like MMLU and MMMU.

Which model is more cost-effective for general-purpose tasks?

On the prices listed here, GPT-4o Mini is the more cost-effective option for general-purpose tasks:

- GPT-4o Mini costs $0.15 per million input tokens and $0.60 per million output tokens.

- Gemini 1.5 Flash costs $0.35 per million input tokens and $1.05 per million output tokens, a blended price of $0.53 per million tokens.

- GPT-4o Mini is cheaper on both input and output, so it will be the more economical choice for most use cases, especially for applications with a high volume of token processing.

- However, both models are inexpensive in absolute terms, so other factors like performance and specific capabilities should also be considered when making a choice.
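To compare the two price lists on equal terms, it helps to compute a blended price for both models. The sketch below assumes a 3:1 ratio of input to output tokens, which is an assumption rather than something stated in this comparison, although it does reproduce the $0.53 blended figure quoted for Gemini 1.5 Flash. The function names are illustrative.

```python
# Prices in USD per million tokens, as (input, output), from the figures above.
PRICES = {
    "GPT-4o Mini": (0.15, 0.60),
    "Gemini 1.5 Flash": (0.35, 1.05),
}


def blended_price(input_price: float, output_price: float, input_ratio: float = 3.0) -> float:
    """Weighted average price per million tokens.

    input_ratio is the assumed number of input tokens per output token.
    """
    return (input_ratio * input_price + output_price) / (input_ratio + 1)


def workload_cost(input_price: float, output_price: float,
                  input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a workload with the given token counts."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    for name, (inp, out) in PRICES.items():
        monthly = workload_cost(inp, out, input_tokens=30_000_000, output_tokens=10_000_000)
        print(f"{name}: blended ${blended_price(inp, out):.4f}/M tokens, "
              f"${monthly:.2f} for 30M input + 10M output tokens")
```

With the listed prices and the assumed 3:1 ratio, the blended price works out to about $0.53 per million tokens for Gemini 1.5 Flash and about $0.26 for GPT-4o Mini. A workload with a different input-to-output mix can be checked by changing `input_ratio` or by passing actual token counts to `workload_cost`.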

How do the models compare in terms of performance benchmarks?

GPT-4o Mini and Gemini 1.5 Flash perform similarly on general knowledge and multimodal benchmarks, with GPT-4o Mini ahead by a few points there and by a wider margin on coding:

- MMLU (Massive Multitask Language Understanding): GPT-4o Mini scores 82.0% (5-shot) compared to Gemini 1.5 Flash's 78.9% (5-shot).

- MMMU (Massive Multi-discipline Multimodal Understanding): GPT-4o Mini scores 59.4%, while Gemini 1.5 Flash scores 56.1% (0-shot).

- HumanEval: GPT-4o Mini achieves 87.2% (0-shot), outperforming Gemini 1.5 Flash's 74.3% (0-shot).

- HellaSwag: Gemini 1.5 Flash scores 86.5% (10-shot), while this benchmark is not available for GPT-4o Mini.

These benchmarks suggest that GPT-4o Mini performs slightly better than or on par with Gemini 1.5 Flash on language understanding, reasoning, and knowledge-based tasks, and noticeably better on HumanEval's code generation tasks. The MMLU and MMMU differences are relatively small, and real-world performance may vary depending on the specific use case.
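The size of each gap is easier to judge in percentage points. The short sketch below simply subtracts the scores quoted above; HellaSwag is left out because no GPT-4o Mini score is listed for it. The dictionary and function names are illustrative.

```python
# Scores in percent, as (GPT-4o Mini, Gemini 1.5 Flash), from the list above.
SCORES = {
    "MMLU": (82.0, 78.9),
    "MMMU": (59.4, 56.1),
    "HumanEval": (87.2, 74.3),
}


def score_gaps(scores: dict[str, tuple[float, float]]) -> dict[str, float]:
    """GPT-4o Mini's lead over Gemini 1.5 Flash, in percentage points."""
    return {name: round(gpt - gemini, 1) for name, (gpt, gemini) in scores.items()}


if __name__ == "__main__":
    for benchmark, gap in score_gaps(SCORES).items():
        print(f"{benchmark}: GPT-4o Mini leads by {gap} points")
```

On these figures the lead is 3.1 points on MMLU, 3.3 points on MMMU, and 12.9 points on HumanEval.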

What are the key factors to consider when choosing between GPT-4o Mini and Gemini 1.5 Flash for a project?

When choosing between GPT-4o Mini and Gemini 1.5 Flash for a project, consider the following factors:

- Context Length: If your project requires processing very large documents or extensive conversation histories, Gemini 1.5 Flash's larger context window (1M tokens) may be advantageous.

- Speed Requirements: For applications needing extremely fast output, Gemini 1.5 Flash's higher token generation speed may be preferable.

- Latency Sensitivity: If your application requires very low latency for the first response, GPT-4o Mini's lower TTFT might be more suitable.

- Budget: Both models are cost-effective, but on the prices quoted above GPT-4o Mini is cheaper per token for both input and output, which matters most for high-volume applications.

- Performance Requirements: Consider the slight performance edge of GPT-4o Mini on certain benchmarks if your application aligns with these tasks.

- Maximum Output Length: If your application needs to generate longer responses in a single request, GPT-4o Mini's higher maximum output (16.4K tokens) might be beneficial.

- Language Diversity: For applications requiring support for a wide range of languages, Gemini 1.5 Flash's explicit support for over 100 languages may be an advantage.

- API Integration: Consider the ease of integration with your existing infrastructure and the specific API features offered by OpenAI (for GPT-4o Mini) or Google (for Gemini 1.5 Flash).

Evaluate these factors based on your project's specific requirements, balancing the need for advanced capabilities with cost-effectiveness, speed, and scalability.
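The context-length and maximum-output factors are hard limits, so they can be checked before weighing anything else. The sketch below is a minimal filter using the limits quoted in this comparison. It assumes "128K" means 128,000 tokens, "16.4K" means 16,384 tokens, and "1 million" means 1,000,000 tokens, and that input and output together must fit in the context window. The names are illustrative and no provider API is called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    context_tokens: int      # total context window
    max_output_tokens: int   # maximum tokens generated per request


# Assumed exact values for the rounded figures quoted in this comparison.
LIMITS = {
    "GPT-4o Mini": Limits(context_tokens=128_000, max_output_tokens=16_384),
    "Gemini 1.5 Flash": Limits(context_tokens=1_000_000, max_output_tokens=8_192),
}


def models_that_fit(input_tokens: int, output_tokens: int) -> list[str]:
    """Names of models whose limits can accommodate the request."""
    return [
        name
        for name, limits in LIMITS.items()
        if output_tokens <= limits.max_output_tokens
        and input_tokens + output_tokens <= limits.context_tokens
    ]


if __name__ == "__main__":
    print(models_that_fit(300_000, 2_000))   # large document, short answer
    print(models_that_fit(50_000, 12_000))   # medium prompt, long answer
    print(models_that_fit(10_000, 1_000))    # small request
```

A request that exceeds one model's limits narrows the choice immediately; when both models fit, the remaining factors such as latency, speed, and budget decide.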

How many AI models can I build on my app?

You can build as many models as you want!

Licode places no limits on the number of models you can create, allowing you the freedom to design, experiment, and refine as many data models or AI-powered applications as your project requires.

Which LLMs can we use with Licode?

Licode currently supports integration with seven leading large language models (LLMs), giving you flexibility based on your needs:

- OpenAI: GPT 3.5 Turbo, GPT 4o Mini, GPT 4o

- Google: Gemini 1.5 Pro, Gemini 1.5 Flash

- Anthropic: Claude 3 Sonnet, Claude 3 Haiku

These LLMs cover a broad range of capabilities, from natural language understanding and generation to more advanced conversational AI. Depending on the complexity of your project, you can choose the right LLM to power your AI app. This wide selection ensures that Licode can support everything from basic text generation to advanced, domain-specific tasks such as image and code generation.

Do I need any technical skills to use Licode?

Not at all! Our platform is built for non-technical users.

The drag-and-drop interface makes it easy to build and customize your AI tool, including its back-end logic, without coding.

Can I use my own branding?

Yes! Licode allows you to fully white-label your AI tool with your logo, colors, and brand identity.

Is Licode free to use?

Yes, Licode offers a free plan that allows you to build and publish your app without any initial cost.

This is perfect for startups, hobbyists, or developers who want to explore the platform without a financial commitment.

Some advanced features require a paid subscription, starting at just $20 per month.

The paid plan unlocks additional functionalities such as publishing your app on a custom domain, utilizing premium large language models (LLMs) for more powerful AI capabilities, and accessing the AI Playground—a feature where you can experiment with different AI models and custom prompts.

How do I get started with Licode?

Getting started with Licode is easy, even if you're not a technical expert.

Simply open the Licode studio, where you can start building your app.

You can choose to create a new app either from scratch or by using a pre-designed template, which speeds up development.

Licode’s intuitive No Code interface allows you to build and customize AI apps without writing a single line of code. Whether you're building for business, education, or creative projects, Licode makes AI app development accessible to everyone.